Secure Gen AI

How to Stop Data Leaks Before They Leave the Prompt

Here is how to secure Gen AI across your organization.

Gen AI Is Expanding Your Attack Surface Right Now

According to Harmonic’s 2025 report, nearly 22% of files uploaded to Gen AI tools contain sensitive data, and 4.37% of all Gen AI prompts carry confidential information. That is not a fringe risk. That is a systemic exposure problem happening at scale, right now, across your workforce.

To secure Gen AI, you need more than a policy document. You need visibility, detection, and real-time control across every tool your employees use.

What Is Secure Gen AI

Secure Gen AI means your organization can use AI productively while ensuring that sensitive data, intellectual property, and regulated information never leave your environment without authorization. A mature Gen AI security program is what makes that possible.

Real-World Gen AI Security Risks

The risk is not theoretical. The data shows it is already happening. Nearly 70% of organizations identify Gen AI as a top security risk, according to Thales's 2025 report. Yet most security programs have not been updated to account for how AI tools move, process, and retain data. Here is what the exposure actually looks like in practice.

Sensitive Data in Prompts and Uploads

Employees regularly share customer records, financial data, source code, and legal documents with AI tools. Often, this happens because there is no friction, no warning, and no policy enforcement at the point of interaction. The data leaves before anyone realizes it should have been blocked.

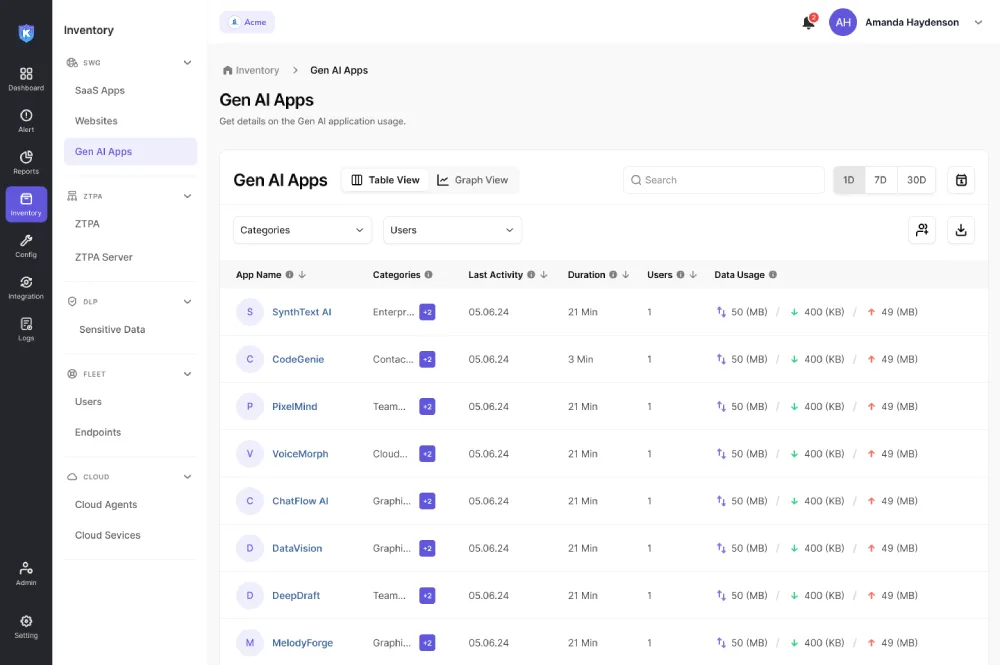

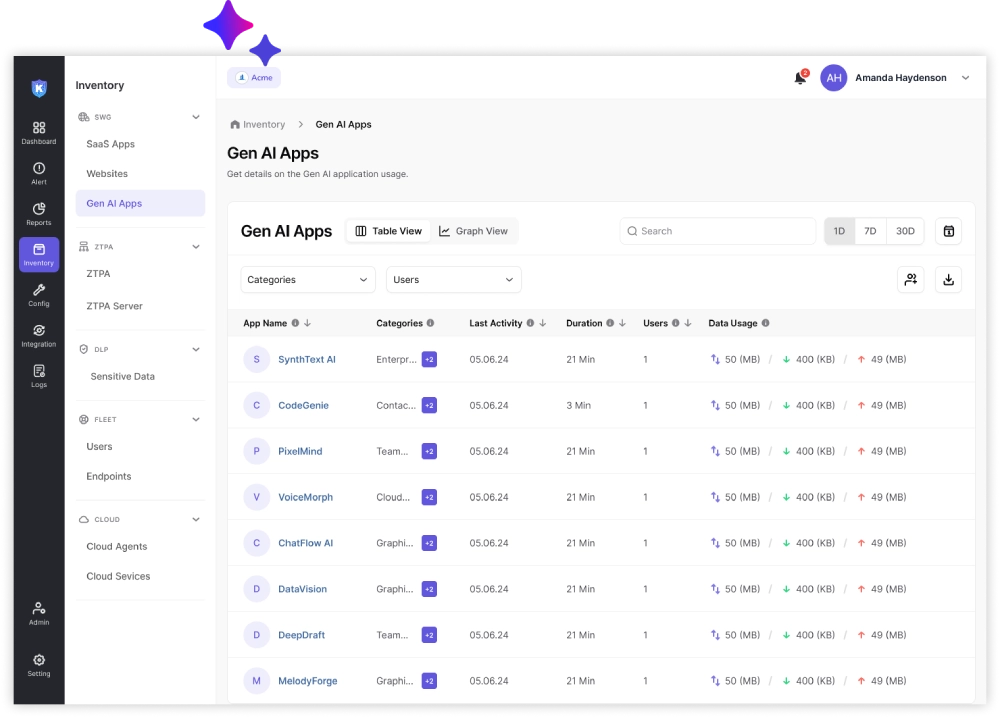

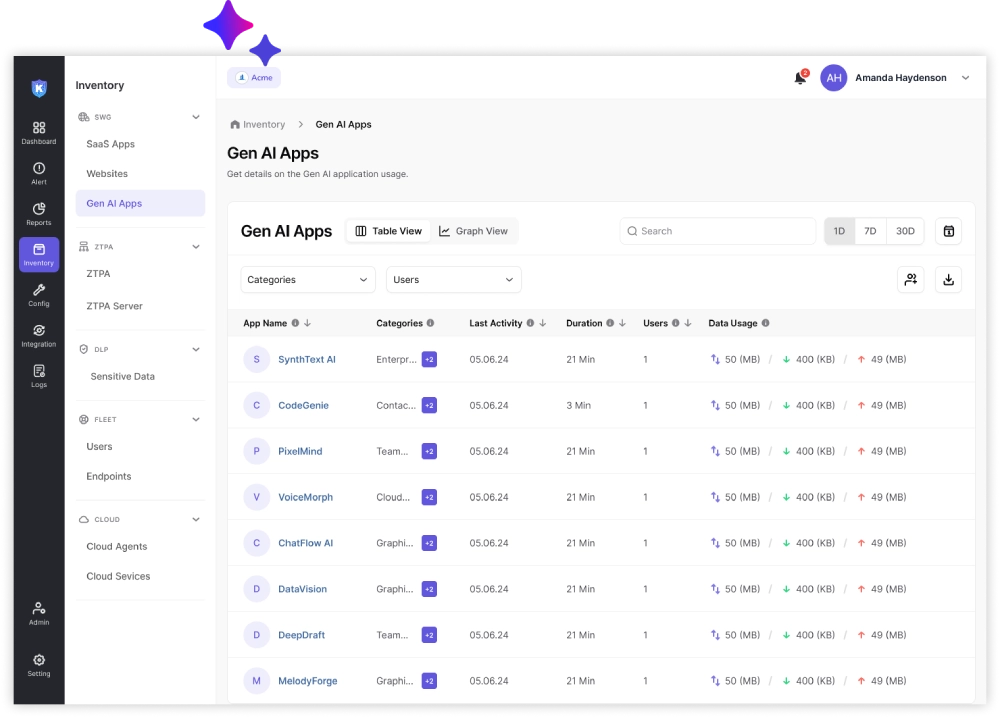

Lack of Visibility into AI Usage

Without endpoint-level visibility, security teams are operating blind. They do not know which AI tools are in use, which employees are using them most heavily, or what categories of data are being shared. That gap makes it impossible to enforce policy or assess risk accurately.

AI-Driven Cyberattacks on the Rise

Attackers are also using AI. AI-powered phishing campaigns, automated vulnerability discovery, and AI-assisted malware generation are all increasing year over year. Your organization needs to account for both how employees use AI and how adversaries are using it against you.

What Defines a Secure Gen AI Framework

Visibility across all Gen AI tools

You need a complete inventory of every AI application in use across your organization, including tools accessed through the browser, installed as desktop apps, or integrated into existing workflows.
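A minimal sketch of building that inventory from web proxy or DNS logs, assuming you already collect the hostnames each device contacts. The `AI_APP_CATALOG` mapping, the `sanctioned` set, and the function names are hypothetical. A production catalog would be far larger and maintained continuously, and desktop apps or embedded integrations may need endpoint telemetry rather than hostname matching.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical, deliberately tiny catalog mapping AI tool names to their domains.
AI_APP_CATALOG: dict[str, tuple[str, ...]] = {
    "ChatGPT": ("chatgpt.com", "chat.openai.com"),
    "Claude": ("claude.ai",),
    "Gemini": ("gemini.google.com",),
    "Copilot": ("copilot.microsoft.com",),
}


def match_app(hostname: str, catalog: dict[str, tuple[str, ...]]) -> str | None:
    """Return the AI app whose domain equals the hostname or is its parent domain."""
    host = hostname.lower().rstrip(".")
    for app, domains in catalog.items():
        for domain in domains:
            if host == domain or host.endswith("." + domain):
                return app
    return None


def build_inventory(hostnames, catalog, sanctioned):
    """Count hits per AI app in observed hostnames and flag unsanctioned apps."""
    hits: Counter[str] = Counter()
    for hostname in hostnames:
        app = match_app(hostname, catalog)
        if app is not None:
            hits[app] += 1
    return {app: {"hits": n, "sanctioned": app in sanctioned} for app, n in hits.items()}


if __name__ == "__main__":
    observed = ["chatgpt.com", "api.claude.ai", "intranet.example.com"]
    print(build_inventory(observed, AI_APP_CATALOG, sanctioned={"ChatGPT"}))
```

Matching on the parent domain (`host.endswith("." + domain)`) catches subdomains such as API endpoints while avoiding false matches on look-alike names like `notclaude.ai`.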

Data classification and detection

Before you can enforce policy, you need to know what data is sensitive. That means classifying data at rest, in motion, and at the point of interaction with AI tools, including inside prompts and file uploads.
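As an illustration of detection at the point of interaction, the sketch below labels prompt text with two simple detectors: an email pattern, and a card-number pattern confirmed with the Luhn checksum to cut false positives. The label names (`pii.email`, `financial.card_number`) and the patterns are assumptions for this example, not a complete classifier.

```python
from __future__ import annotations

import re

# Illustrative detectors only; real classifiers combine many patterns and context.
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")
CARD_CANDIDATE_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, test mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def classify(text: str) -> set[str]:
    """Return the set of hypothetical sensitivity labels found in the text."""
    labels: set[str] = set()
    if EMAIL_RE.search(text):
        labels.add("pii.email")
    for match in CARD_CANDIDATE_RE.finditer(text):
        digits = re.sub(r"\D", "", match.group())  # drop spaces and dashes
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            labels.add("financial.card_number")
            break
    return labels
```

The same function can run on data at rest, in motion, or inside a prompt; only the place where it is called changes.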

Policy enforcement at the endpoint and network level

Controls need to operate where the data actually moves. Endpoint agents and network inspection working together give you the coverage to block, warn, or log interactions in real time.

Real-time monitoring and control

Static rules and periodic audits are not fast enough. Gen AI security requires continuous monitoring with the ability to take action in the moment, not after the fact.

Gen AI Security Best Practices

Restrict sensitive data sharing

Define clear categories of data that should never be entered into external AI tools. Communicate those categories to employees and back them up with technical controls.

Implement data classification and tagging

Automated classification helps you enforce policy consistently without relying on individual employees to make the right judgment in every interaction.

Monitor and log Gen AI interactions

Logging prompt activity, file uploads, and AI tool usage gives your security team the forensic record needed to investigate incidents and demonstrate compliance.
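A minimal sketch of one audit record per interaction, written as a JSON line. The field names are hypothetical, and storing a SHA-256 digest of the prompt instead of its raw text is an assumed design choice: it lets investigators confirm whether a specific prompt they already hold was submitted, without turning the log itself into another copy of the sensitive data.

```python
from __future__ import annotations

import hashlib
import json
from datetime import datetime, timezone


def audit_record(user: str, tool: str, action: str, prompt: str,
                 labels: set[str], attachments: list[str] | None = None) -> str:
    """Build one JSON audit log line for a Gen AI interaction (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC for cross-site correlation
        "user": user,
        "tool": tool,
        "action": action,  # e.g. "allow", "log", "warn", "block"
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt_chars": len(prompt),
        "labels": sorted(labels),  # sorted so identical events serialize identically
        "attachments": attachments or [],
    }
    return json.dumps(record, sort_keys=True)
```

Whether to keep raw prompts for some data classes is a retention and privacy decision; this sketch simply assumes they are not kept.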

Control access to AI tools

Not every employee needs access to every AI application. Role-based access controls applied to AI tools reduce your exposure surface significantly.
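Role-based access to AI tools can be expressed as a default-deny lookup from roles to permitted tools. The roles, users, and tool names below are hypothetical; in practice the user-to-role mapping would come from your identity provider.

```python
# Hypothetical role-to-tool grants.
ROLE_TOOL_ACCESS: dict[str, set[str]] = {
    "engineering": {"Copilot"},
    "marketing": {"ChatGPT"},
    "security": {"ChatGPT", "Copilot", "Claude"},
}

# Hypothetical user-to-role assignments.
USER_ROLES: dict[str, set[str]] = {
    "alice": {"engineering"},
    "bob": {"marketing"},
}


def can_use(user: str, tool: str) -> bool:
    """Default deny: allow only if at least one of the user's roles grants the tool."""
    roles = USER_ROLES.get(user, set())
    return any(tool in ROLE_TOOL_ACCESS.get(role, set()) for role in roles)
```

Unknown users and unknown tools fall through to `False`, so a newly discovered AI app stays blocked until someone grants it explicitly.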

Train employees on AI risks

Employees who understand why certain data should not be shared with AI tools are more likely to make good decisions when there is no technical control in place. Training should be specific, practical, and updated regularly.

Core Capabilities of a Gen AI Security Solution

Prompt inspection and filtering

The ability to inspect the content of prompts in real time and apply policy before data is submitted to an AI model.
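A minimal sketch of inline filtering, assuming the policy is to redact and forward rather than block outright. The patterns and the `[REDACTED:<label>]` placeholder format are illustrative assumptions. The function would run before the prompt is sent and returns the cleaned text plus a count of what was removed.

```python
from __future__ import annotations

import re

# Illustrative patterns; a real deployment would draw these from its DLP policy.
REDACTION_PATTERNS = [
    ("EMAIL", re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")),
    ("US_SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
]


def filter_prompt(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace sensitive spans with placeholders and report how many were replaced."""
    counts: dict[str, int] = {}
    for label, pattern in REDACTION_PATTERNS:
        prompt, n = pattern.subn(f"[REDACTED:{label}]", prompt)
        if n:
            counts[label] = n
    return prompt, counts
```

Redaction keeps the employee productive (the rest of the prompt still goes through), while the returned counts can feed the audit log.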

Data loss prevention for Gen AI

Purpose-built DLP rules that account for the unique patterns of Gen AI interaction, including conversational data entry and document uploads.
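Conversational entry means sensitive data can arrive a few records at a time, with each prompt looking harmless on its own. One way to account for that, sketched here under the assumption that email addresses stand in for customer records, is to apply the limit to a running total per conversation instead of to each prompt. `ConversationDLP` and its default threshold are hypothetical.

```python
from __future__ import annotations

import re
from collections import defaultdict

EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")


class ConversationDLP:
    """Track sensitive records per conversation, not just per prompt."""

    def __init__(self, session_limit: int = 20) -> None:
        self.session_limit = session_limit
        self.totals: defaultdict[str, int] = defaultdict(int)

    def check(self, session_id: str, prompt: str) -> bool:
        """Add this prompt's records to the session total; True once over the limit."""
        self.totals[session_id] += len(EMAIL_RE.findall(prompt))
        return self.totals[session_id] > self.session_limit
```

A per-prompt rule with the same limit would never fire if an employee pasted customer emails in batches of a few at a time; the session total does.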

User behavior analytics

Baseline modeling of normal AI usage so that anomalous behavior, such as a spike in sensitive data uploads, triggers an alert.
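A simple baseline model compares a user's count of sensitive uploads today against that user's recent daily history using a z-score. The seven-day minimum and the threshold of 3 standard deviations are assumed values for illustration; real deployments tune these and usually model more than one signal.

```python
from __future__ import annotations

import statistics


def is_anomalous(history: list[int], today: int,
                 z_threshold: float = 3.0, min_days: int = 7) -> bool:
    """Flag today's count if it sits far above the user's own baseline."""
    if len(history) < min_days:
        return False  # not enough data to form a baseline yet
    mean = statistics.mean(history)
    stdev = statistics.pstdev(history)
    if stdev == 0:
        return today > mean  # flat baseline: any increase counts as unusual
    return (today - mean) / stdev > z_threshold
```

Using each user's own history, rather than a company-wide average, avoids constantly alerting on teams whose normal work involves more sensitive data.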

Context-aware policy enforcement

Policy that adapts based on the user, the device, the data classification, and the AI tool in use, rather than applying a single blanket rule.
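The sketch below shows context-aware enforcement as an ordered rule list over user role, device state, data labels, and tool status, returning block, warn, log, or allow. Every rule, label, and role name here is a hypothetical example; the point is that the same data can produce different outcomes in different contexts.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    LOG = "log"
    WARN = "warn"
    BLOCK = "block"


@dataclass(frozen=True)
class Context:
    role: str
    managed_device: bool
    data_labels: frozenset[str]
    tool_sanctioned: bool


REGULATED = frozenset({"pii.ssn", "financial.card_number", "phi"})


def decide(ctx: Context) -> Action:
    """Evaluate hypothetical rules in priority order; the first match wins."""
    if ctx.data_labels & REGULATED and not ctx.tool_sanctioned:
        return Action.BLOCK  # regulated data never goes to unsanctioned tools
    if not ctx.managed_device and ctx.data_labels:
        return Action.BLOCK  # no sensitive data from unmanaged devices
    if ctx.data_labels & REGULATED:
        return Action.WARN  # sanctioned tool, managed device: warn and log
    if "source_code" in ctx.data_labels and ctx.role != "engineering":
        return Action.WARN
    if not ctx.tool_sanctioned:
        return Action.LOG  # nothing sensitive detected, but track shadow AI use
    return Action.ALLOW
```

For example, a card number sent to a sanctioned tool from a managed device produces a warning, while the same data sent to an unsanctioned tool is blocked.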

SaaS and AI app visibility

A unified view of all sanctioned and unsanctioned applications, including AI tools accessed through the browser or integrated into productivity platforms.

How Kitecyber Enables Secure Gen AI

Endpoint-first visibility into Gen AI usage

Data lineage tracking across prompts and files

Real-time control over uploads and interactions

Unified DLP across endpoint, SaaS, and Gen AI tools

Use Cases

Prevent sensitive data exposure in AI tools

Stop employees from sharing customer data, financial records, source code, or legal documents with external AI platforms.

Secure remote and hybrid workforce

Apply consistent policy to all managed devices regardless of location, ensuring that employees working from home have the same controls as those in the office.

Protect intellectual property and source code

Development teams using AI coding assistants are a high-risk group. Kitecyber is designed to apply specific controls to source code and technical documentation.
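For coding assistants, two useful signals are embedded credentials and pasted source code. The sketch below assumes a regex for AWS access key IDs (the well-known `AKIA`/`ASIA` prefixes followed by 16 uppercase alphanumerics), a PEM private key header, and a crude line-based heuristic for code. The three-line threshold and the finding names are arbitrary assumptions, not a substitute for a dedicated secret scanner.

```python
from __future__ import annotations

import re

# Illustrative detectors; dedicated secret scanners ship hundreds of rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH |DSA )?PRIVATE KEY-----"),
}

# Crude heuristic: lines that begin like common code constructs in several languages.
CODE_LINE_RE = re.compile(
    r"^\s*(?:def |class |import |from \S+ import |#include |func |public |private |fn |const |let |return\b)"
)


def scan_for_code_risk(text: str, min_code_lines: int = 3) -> set[str]:
    """Return hypothetical finding names for secrets and likely source code."""
    findings = {name for name, pattern in SECRET_PATTERNS.items() if pattern.search(text)}
    code_lines = sum(1 for line in text.splitlines() if CODE_LINE_RE.match(line))
    if code_lines >= min_code_lines:
        findings.add("source_code")
    return findings
```

Credentials usually warrant a hard block, while the `source_code` finding is a softer signal better suited to a warning or a role-based rule.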

Ensure compliance with data protection regulations

GDPR, HIPAA, CCPA, and other frameworks require demonstrable controls over how personal and regulated data is handled. AI data security tools help you meet those requirements with audit-ready logging and policy documentation.

Why Traditional Security Tools Fail for Gen AI

Lack of visibility into prompt-level activity

Traditional DLP tools inspect files and emails. They were not built to inspect conversational inputs or monitor how employees interact with AI models in real time.

Fragmented tools across endpoint and cloud

When endpoint security, cloud access security brokers, and web filters operate as separate tools with separate consoles, data leaks fall through the gaps between them.

Delayed detection of data leaks

Many traditional tools rely on log analysis and periodic review. By the time a leak is detected, the data has already been processed by an external AI model. Real-time prevention requires a different architecture.

Benefits of a Unified Gen AI Security Approach

Real-time protection

Catching data exposure at the moment of interaction is more effective than detecting it hours or days later.

Reduced data leakage risk

Consistent policy enforcement across all AI tools reduces the number of incidents that require manual investigation or incident response.

Simplified operations

Managing one platform instead of three or four reduces training burden, reduces alert fatigue, and improves the speed at which security teams can respond to new threats.

Better compliance and governance

A unified platform makes it easier to produce audit reports, demonstrate compliance, and document your AI governance posture to regulators and customers.

Why Kitecyber Stands Apart

Faster deployment without complex infrastructure

Kitecyber is designed for rapid deployment. Organizations could get visibility and control running within days, without requiring major infrastructure changes or long implementation projects.

Lower operational overhead

A unified platform reduces the number of tools your team needs to manage, the number of alerts to triage, and the engineering effort required to maintain integrations between disparate systems.

Unified visibility across all data channels

Endpoint, SaaS, web, and Gen AI activity are visible in a single console. That context allows for smarter policy decisions and faster incident investigation.

Take Control of Gen AI Data Risk

Gen AI is not going away. Your employees will continue to use it, and the productivity benefits are real. The goal is not to block AI. The goal is to use it safely. Kitecyber gives your security team the visibility and control to make that possible.

FAQs

Does Kitecyber support compliance with GDPR, HIPAA, and CCPA?

Kitecyber is designed to support compliance with data protection frameworks including GDPR, HIPAA, and CCPA by providing audit-ready logging of AI interactions, policy documentation, and demonstrable controls over how sensitive data is handled in AI environments.